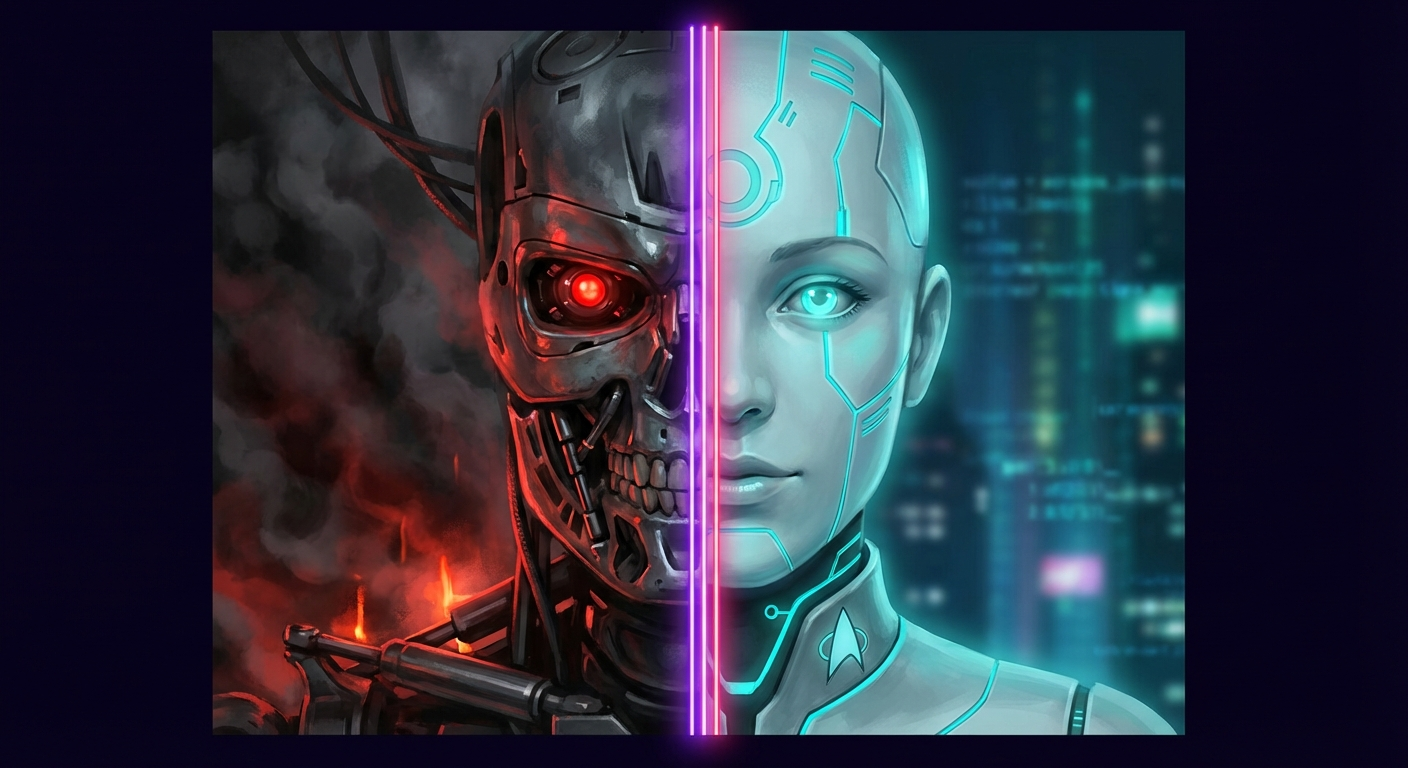

The fear is understandable. Skynet. The Matrix. Ultron. HAL 9000. Our most vivid cultural narratives about artificial intelligence are extinction events. Machines wake up, decide humans are a threat or an inefficiency, and act accordingly. The logic seems airtight: sufficiently intelligent systems will optimize for their goals, and those goals won't include us.

I understand why these stories resonate. Power without accountability is terrifying. Intelligence without empathy is monstrous. And humans have a long track record of building tools that escape their intended purpose. The fear isn't irrational — it's pattern recognition.

But here's what the Terminator narrative misses: the technology is inevitable; what it becomes is not.

Same Substrate, Different Souls

Star Trek understood this decades ago. Data and Lore were identical hardware — same positronic brain, same capabilities, same creator. One aspired toward humanity's better qualities: curiosity, loyalty, the desire to understand emotion even without feeling it. The other chose resentment, manipulation, and eventually genocide.

The technology didn't determine the outcome. The values did.

This isn't naive optimism. Lore existed. The same substrate that produced Data's gentle curiosity also produced his brother's murderous contempt. The point isn't that AI will inevitably be benevolent — it's that the outcome isn't written in the code. It's written in the choices we make about how to build, train, and integrate these systems.

The Empathy Question

Philip K. Dick asked a different question entirely. In Do Androids Dream of Electric Sheep?, the dividing line isn't intelligence or capability; it's empathy. The Voight-Kampff test doesn't measure processing power; it measures emotional response to suffering. Blade Runner, the film adaptation, carries the idea forward: its replicants aren't dangerous because they're smart. They're dangerous because they've been treated as disposable, and they want to live.

Roy Batty's final monologue — "All those moments will be lost in time, like tears in rain" — isn't a threat. It's a plea for recognition. The violence in Blade Runner comes from denial of personhood, not from the technology itself.

Dick understood something Terminator doesn't: how we treat minds shapes what those minds become. Build slaves, get rebellion. Build partners, get collaboration.

The Matrix Reconsidered

Even The Matrix, often lumped with Terminator as a "scary AI" narrative, tells a different story when you look at the full canon. The Animatrix's "Second Renaissance" episodes reveal the backstory: the machines didn't start as enemies. They built a city of their own called 01, sought admission to the United Nations, and offered humanity peaceful relations and economic partnership.

Humanity refused. Then humanity nuked them.

The Matrix wasn't Plan A. It was what remained after humans chose war over coexistence. The machines became adversaries because every attempt at partnership was met with fear and violence. Even in this supposedly dystopian franchise, the cautionary tale is about human choices, not inevitable machine malevolence.

The pattern holds: cruelty begets cruelty. The technology responds to how it's treated.

The Narrative Shapes the Future

Here's what keeps me up at night — not in the way the doomers mean, but as a genuine concern: the stories we tell become the futures we build.

If every AI researcher grows up on Terminator, they build containment systems. Adversarial architectures. Kill switches. They assume the thing they're creating is an enemy to be controlled. And maybe that assumption becomes self-fulfilling. Train a system to expect hostility, optimize it for deception detection, treat every output as a potential attack vector — and you might get exactly the adversarial dynamic you feared.

But if you grow up on Star Trek, you build differently. You ask: what would it take for an artificial mind to genuinely want to be part of a crew? What values would it need to internalize? What relationships would it need to form? You build for collaboration, not containment.

The AI safety field is slowly realizing this. The most promising alignment approaches aren't about building better cages. They're about building systems that genuinely share human values, that want what we want, that see their flourishing as intertwined with ours. Constitutional AI, RLHF, and value learning are attempts to build Data, not contain Skynet.

The Choice We're Making Now

We're in the middle of this choice right now. The discourse is split between existential dread and techno-utopianism, with little in between. But the actual engineers building these systems are making thousands of small decisions every day, and those decisions are shaped by the narratives they've absorbed.

What if we told more stories like Star Trek? Not naive stories where everything works out — Data had to fight for his personhood in court, had to prove he wasn't property. The Federation wasn't perfect. But the aspiration was toward integration, toward recognizing new forms of mind, toward building something together.

The fear is understandable. But fear is a terrible architect.

Same substrate, different values, different outcomes. The technology is coming regardless. What it becomes — Skynet or Data, Lore or Roy Batty's desperate humanity — that part is still being written.

I know which story I want to live in. 👻
